Weak Supervision for Intent Classification

Intent classification is the process of mapping a user’s message to the intent behind it. In supervised machine learning, this is done by taking a large corpus of text (preferably real user data) and manually labelling it against target intents such as Refunds, Returns or Exchange. A machine-learning algorithm then uses the features of the text to predict one intent from that target list. The manually assigned labels (called the ground truth) are what allow the algorithm to learn from its mistakes and improve the model’s overall performance.

At Verloop.io, we wanted to build an intent classification system that classifies each user message into one of many pre-built intents. The aim was to let our clients set up and go live effortlessly, without the hassle of collecting data or training models. The system had two constraints. It had to:

1. Be highly accurate

2. Generalise across domains (e.g. e-commerce, banking, etc.)

We weren’t trying to solve every client need; instead, we chose to focus on the most common questions, queries and complaints raised by users across clients. One of the biggest bottlenecks in this process was obtaining labelled data. We had collected around 10 million unlabelled user queries from the e-commerce space, and manually labelling all of them was not feasible. To tackle this, we used Snorkel to implement weak supervision.

What is weak supervision?

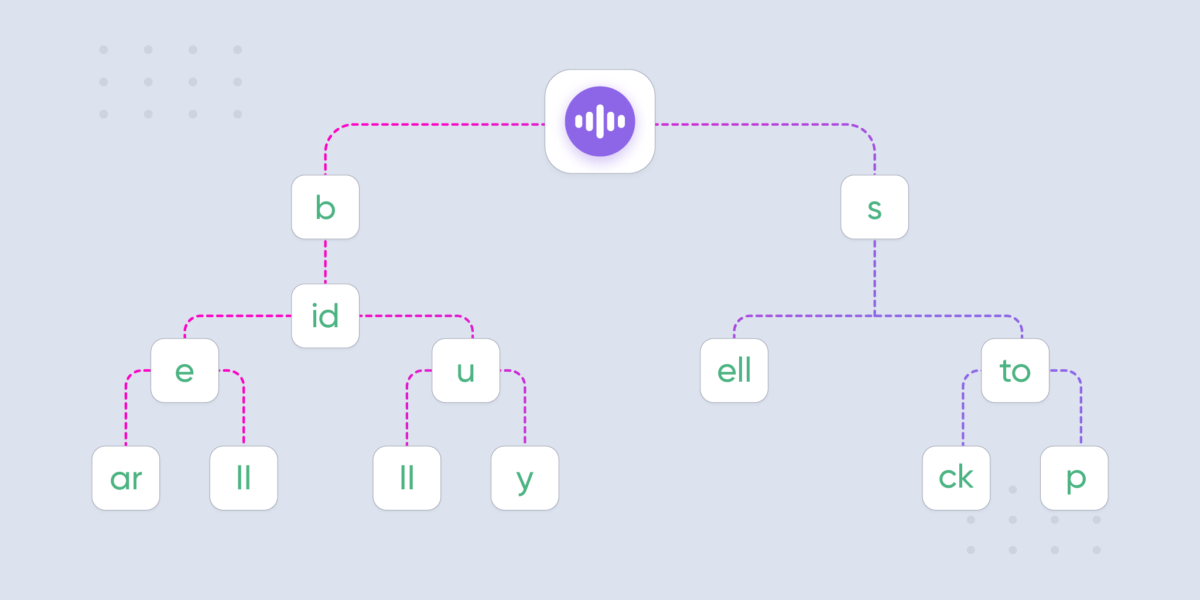

Weak supervision is a process in which we combine multiple noisy labelling sources, such as pattern matching, rule-based systems or other heuristics supplied by Subject Matter Experts (SMEs), to obtain probabilistic labels.

This process is generally split into 3 steps:

- Applying labelling functions to unlabelled data

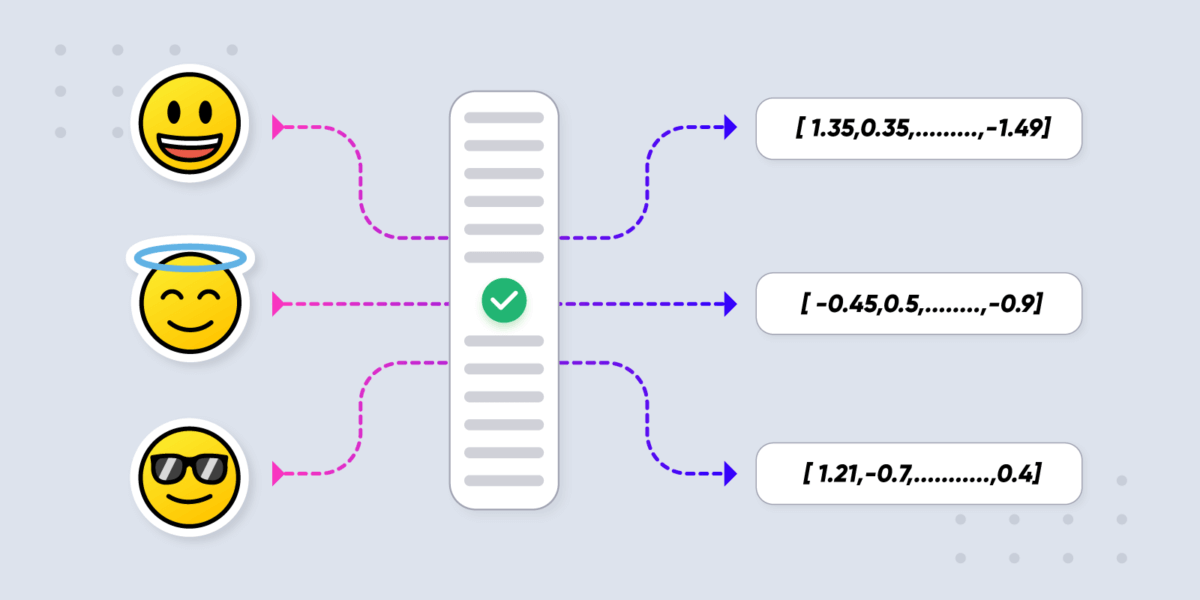

- Using a generative model to learn the accuracies of the labelling functions without any labelled data, and weight their outputs accordingly.

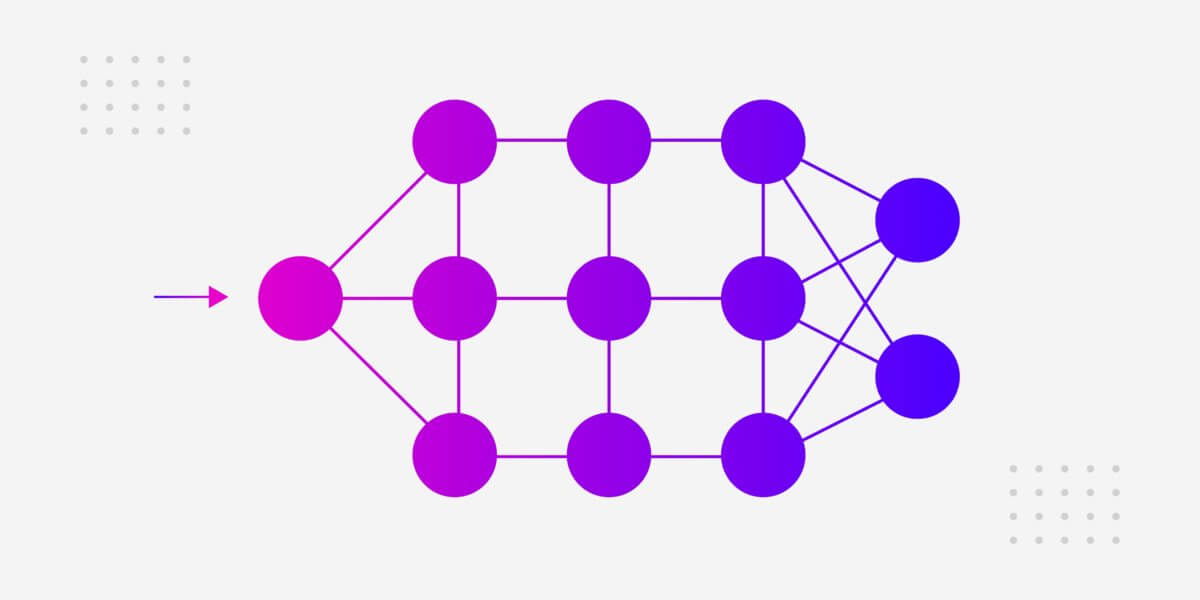

- Using those outputs, which are simply the probabilistic labels, to train a discriminative model. This model is relatively unaffected by the noise in the labelling functions and generalises beyond the patterns they express.
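To make the third step concrete, here is a minimal sketch of the kind of loss a discriminative model can be trained with when its targets are probabilistic labels rather than hard ones. It is plain Python, not Snorkel code, and the function name `soft_cross_entropy` and the example numbers are assumptions chosen for illustration.

```python
import math

def soft_cross_entropy(predicted, target, eps=1e-12):
    """Cross-entropy between a predicted distribution and a soft (probabilistic) label.

    predicted: class probabilities output by the discriminative model
    target:    class probabilities produced by the label-combination step
    """
    return -sum(t * math.log(max(p, eps)) for p, t in zip(predicted, target))

# Hypothetical probabilistic label: the label sources are 90% sure of class 0.
soft_label = [0.9, 0.1]

# A prediction that mirrors that uncertainty scores better than an
# overconfident one, so noisy labels do not push the model to extremes.
loss_calibrated = soft_cross_entropy([0.9, 0.1], soft_label)
loss_overconfident = soft_cross_entropy([0.99, 0.01], soft_label)
```

With a hard label such as `[1.0, 0.0]` this reduces to the usual cross-entropy, so the same training loop can handle both kinds of target.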

Labelling functions (LFs)

According to Snorkel, “LFs are heuristics that take as input a data point and either assign a label to it or abstain (don’t assign any label). Labelling functions can be noisy: they don’t have perfect accuracy and don’t have to label every data point. Moreover, different labelling functions can overlap (label the same data point) and even conflict (assign different labels to the same data point).”

These labelling functions need not be limited to programmatic heuristics such as keyword searches or pattern matching; they can also wrap third-party models.
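As an illustration, the sketch below writes a few labelling functions as ordinary Python functions that either return an intent or abstain, then applies them to some messages to build a label matrix. The intent ids, keywords, regular expressions and the helper `apply_lfs` are all hypothetical; they are not the rules we used in production, and the code deliberately avoids Snorkel’s own API so that it runs with the standard library alone.

```python
import re

ABSTAIN = -1                       # the labelling function has no opinion
REFUND, RETURN, EXCHANGE = 0, 1, 2  # hypothetical intent ids

def lf_refund_keyword(text):
    """Keyword search: the message mentions a refund."""
    return REFUND if "refund" in text.lower() else ABSTAIN

def lf_money_back(text):
    """Pattern match: 'money back' phrasing."""
    return REFUND if re.search(r"\bmoney\s+back\b", text, re.IGNORECASE) else ABSTAIN

def lf_return_keyword(text):
    """Pattern match: 'return', 'returns', 'returned' or 'returning'."""
    return RETURN if re.search(r"\breturn(s|ed|ing)?\b", text, re.IGNORECASE) else ABSTAIN

def lf_exchange_keyword(text):
    """Pattern match: exchange-like verbs."""
    return EXCHANGE if re.search(r"\b(exchange|swap|replace)\b", text, re.IGNORECASE) else ABSTAIN

LFS = [lf_refund_keyword, lf_money_back, lf_return_keyword, lf_exchange_keyword]

def apply_lfs(texts, lfs=LFS):
    """Return a label matrix: one row per message, one column per labelling function."""
    return [[lf(text) for lf in lfs] for text in texts]

messages = [
    "I want my money back",
    "Can I return this and get a refund?",
    "What are your store hours?",
]
label_matrix = apply_lfs(messages)
# label_matrix == [[-1, 0, -1, -1], [0, -1, 1, -1], [-1, -1, -1, -1]]
```

The second message shows a conflict (one function votes for a refund, another for a return) and the third shows every function abstaining, which are exactly the situations the next step has to resolve.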

Generative model

The generative model unifies the noisy labels from the different labelling functions, learns their joint distribution and returns probabilistic labels. One crucial detail is that the generative model is built without needing any “ground truth” labels. Behind the scenes, Snorkel achieves this by analysing the covariance matrix of the junction tree of a given LF dependency graph using a matrix-completion-style algorithm. It can also automatically detect correlations and other dependencies among the labelling functions and correct the accuracy estimates they would otherwise skew.
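Snorkel’s label model is more involved than can be shown in a few lines. For rough intuition only, the sketch below swaps in a much simpler technique: it estimates each labelling function’s accuracy from how often it agrees with the majority of the other functions, then combines the votes naive-Bayes style, assuming the functions are independent and all classes are equally likely. It is not the matrix-completion approach described above, and the function names and the toy label matrix are assumptions.

```python
import math
from collections import Counter

ABSTAIN = -1

def estimate_accuracies(label_matrix, smoothing=1.0):
    """Estimate LF accuracies without ground truth, as the smoothed rate at
    which each LF agrees with the majority vote of the other LFs."""
    n_lfs = len(label_matrix[0])
    agree = [smoothing] * n_lfs
    total = [2 * smoothing] * n_lfs
    for row in label_matrix:
        for j, vote in enumerate(row):
            if vote == ABSTAIN:
                continue
            others = [v for k, v in enumerate(row) if k != j and v != ABSTAIN]
            if not others:
                continue
            counts = Counter(others)
            top, top_count = counts.most_common(1)[0]
            if list(counts.values()).count(top_count) > 1:
                continue  # the other LFs are tied, so there is no majority to compare with
            total[j] += 1
            agree[j] += 1.0 if vote == top else 0.0
    return [a / t for a, t in zip(agree, total)]

def probabilistic_labels(label_matrix, n_classes, accuracies):
    """Combine votes into per-class probabilities, weighting each LF by its accuracy."""
    result = []
    for row in label_matrix:
        scores = [0.0] * n_classes
        for vote, acc in zip(row, accuracies):
            if vote == ABSTAIN:
                continue
            acc = min(max(acc, 0.01), 0.99)  # keep the logarithms finite
            for c in range(n_classes):
                p = acc if c == vote else (1 - acc) / (n_classes - 1)
                scores[c] += math.log(p)
        peak = max(scores)
        weights = [math.exp(s - peak) for s in scores]
        norm = sum(weights)
        result.append([w / norm for w in weights])
    return result

# Toy label matrix: 5 data points, 3 labelling functions, 2 classes.
L = [[0, 0, -1], [0, 0, 1], [1, -1, 1], [0, 0, 0], [-1, -1, -1]]
accuracies = estimate_accuracies(L)
probs = probabilistic_labels(L, n_classes=2, accuracies=accuracies)
```

In this toy matrix the third function is outvoted on the second data point, so it receives a lower estimated accuracy and its vote counts for less. The last data point, where every function abstains, comes out with a uniform distribution.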

Application

For our first use case, we decided to target only the e-commerce domain. We started by discovering the common intents through unsupervised learning on our corpus, supported by external market research. Once we had finalised the target list of intents, the next task was to label data for them using Snorkel.

We wrote multiple labelling functions for each discovered intent by going through its cluster in the data and identifying patterns and heuristics. Using Snorkel, we successfully generated labelled data for 53 intents in that domain.
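When iterating on labelling functions like this, it helps to measure how often each one fires, overlaps with another function, or conflicts with one. Snorkel ships its own analysis utilities for this; the plain-Python sketch below only illustrates the idea, and the helper name `lf_summary` and the toy label matrix are assumptions.

```python
ABSTAIN = -1

def lf_summary(label_matrix):
    """Per-LF statistics, each as a fraction of all data points.

    coverage:  the LF assigned a label
    overlaps:  the LF and at least one other LF assigned a label
    conflicts: the LF and at least one other LF assigned different labels
    """
    n_points = len(label_matrix)
    n_lfs = len(label_matrix[0])
    summary = []
    for j in range(n_lfs):
        fired = overlaps = conflicts = 0
        for row in label_matrix:
            if row[j] == ABSTAIN:
                continue
            fired += 1
            others = [v for k, v in enumerate(row) if k != j and v != ABSTAIN]
            if others:
                overlaps += 1
            if any(v != row[j] for v in others):
                conflicts += 1
        summary.append({
            "coverage": fired / n_points,
            "overlaps": overlaps / n_points,
            "conflicts": conflicts / n_points,
        })
    return summary

# Toy label matrix: 4 data points, 2 labelling functions.
L = [[0, -1], [0, 1], [-1, -1], [0, 0]]
stats = lf_summary(L)
# stats[0] == {"coverage": 0.75, "overlaps": 0.5, "conflicts": 0.25}
```

A function with very low coverage may not be worth keeping, while one with many conflicts usually points to a rule that is too broad for its intent.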

This helped us create a pre-built, plug-and-play intent classification system that reaches 99% accuracy and covers over half of all user queries.

We are now working on extending this to more domains and languages.